Artificial Intelligence (AI) advances are arriving more quickly and with more powerful results. Powerful AI tools like ChatGPT are being used to write malware, term papers, and developer code, without the social norms we need to govern our behavior or the laws we need to protect us from harm. Technology often outstrips the social norms that govern its use, especially when major innovations occur. Think briefly about texting and driving: it took a decade or more for laws banning texting while driving to be written, yet the world sent 250 billion texts way back in 2002 (Source: WSJ). [Have we convinced people of the dangers of texting and driving even today?]

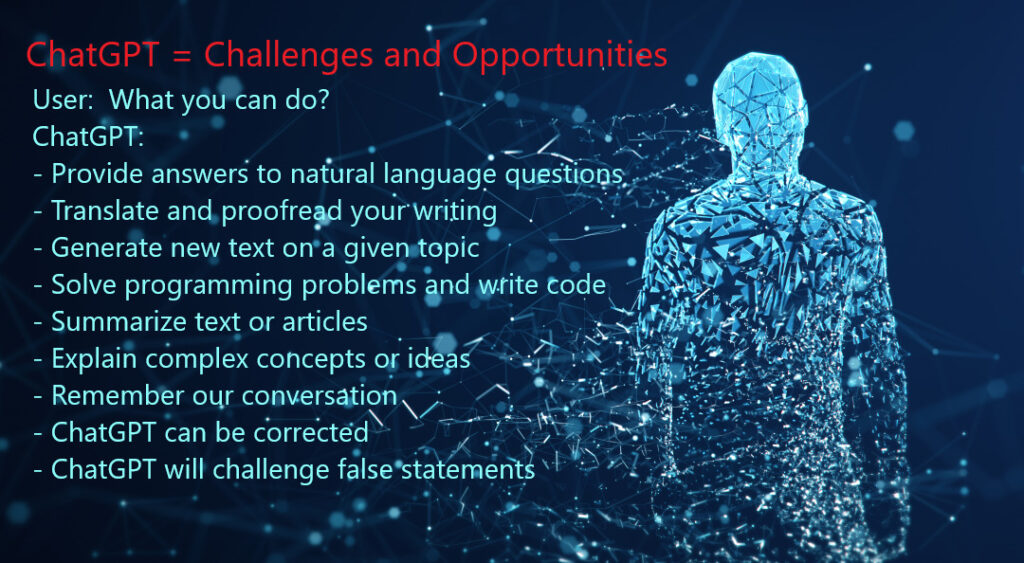

This article explains what ChatGPT is, some of what it can do, and asks important questions about the opportunities and challenges we face from it.

CyberHoot's cybersecurity library of tech terms defines ChatGPT as a conversational chatbot that leverages “Generative Pre-Trained Transformer” (“GPT”) AI algorithms. ChatGPT can converse with users in grammatically correct language and can even correct the grammar of others. It can write and troubleshoot programmers' code. It has a conversational memory and can reference earlier dialogue from the current conversation. It admits mistakes and challenges incorrect assumptions. It can write song lyrics. Given a complex topic, it can summarize the main points in natural language that students can use for term papers. Summarizing a complex subject for better comprehension is something 25% of recently surveyed students admitted to doing. While that may be completely ethical, the same study found that 5% of students were using AI to write their papers.

In 2019, Microsoft invested $1 billion in OpenAI, presumably in the hope of making the search engine landscape more competitive by eventually building OpenAI's language models, such as the one behind ChatGPT, into Bing. Numerous sources have reported that ChatGPT-powered search could be released in Bing as soon as March 2023. According to an article from TheWeek.co.uk:

“Generative AI is being hailed as the next era-defining technological innovation, changing how we create new content online or even experience the internet…”

As generative AI technology improves, fake news and biased opinions will become more difficult to identify. [What will be our source(s) of truth?]

CyberHoot has noticed articles stating that hackers are using ChatGPT to write malware, such as ransomware, and to craft phishing attacks. OpenAI has responded to such criticism by citing its Acceptable Use terms and conditions, which prohibit using ChatGPT for “any kind of harm,” and says it is investigating adding watermarks to the output of its AI engine. Multiple articles from security researchers now claim that hackers have co-opted ChatGPT to write malware despite the checks and balances built into the tool.

So where does this leave us? What does this all mean for the average SMB owner or employee?

Personally or professionally, the answer seems the same to CyberHoot: more and more of the internet content we consume will be produced by generative AI systems. As these systems improve with training, consumers may begin to lose faith in institutions such as the media or the government. If we also lose faith in our more trustworthy institutions, such as our educational or legal/judicial systems, we will face a serious and daunting challenge.

We must have a reasonable, rational, and easy way to separate fact from fiction. In a generative AI future, your common sense won’t be enough to distinguish one from the other. This is no different from how deepfakes are improving to the point where the technology can make anyone appear to say anything.

In an age when organic or GMO-free foods are legally labeled as such, CyberHoot wonders whether we need communication labels that are independently verified as “Generative AI free” in origin. Or does that miss the point of this technology, which is to create meaningful, consumable content indistinguishable from human-created content? Will Avatar 10 through 15 be wholly generated by the latest generative AI movie studio?

Yaakov J. Stein, chief technology officer of Israel-based RAD Data Communications, offered this perspective when Pew Research asked whether technology causes more problems than it solves:

“The problem with AI and machine learning is not the sci-fi scenario of AI taking over the world and not needing inferior humans. The problem is that we are becoming more and more dependent on machines and hence more susceptible to bugs and system failures. This is hardly a new phenomenon – once a major part of schooling was devoted to, e.g., penmanship and mental arithmetic, which have been superseded by technical means. But with the tremendous growth in the amount of information, education is more focused on how to retrieve required information rather than remembering things, resulting not only in less actual storage but less depth of knowledge and the lack of ability to make connections between disparate bits of information, which is the basis of creativity. However, in the past humankind has always developed a more-advanced technology to overcome limitations of whatever technology was current, and there is no reason to believe that it will be different this time.”

Source: Pew Research: Tech Causes More Problems Than It Solves

Sources:

The Guardian: Microsoft Is Building ChatGPT into Its Bing Search Engine

ZDNet: People Are Trying to Get ChatGPT to Write Malware

Reuters: Microsoft Planning an AI Version of Bing Powered by ChatGPT

Additional Reading:

Pew Research (6/30/2020): Tech Causes More Problems Than It Solves – A Selection of Answers For and Against the Premise
